[CVPR 2025] Decouple Distortion from Perception: Region Adaptive Diffusion for Extreme-low Bitrate Perception Image Compression

Published:

Abstract

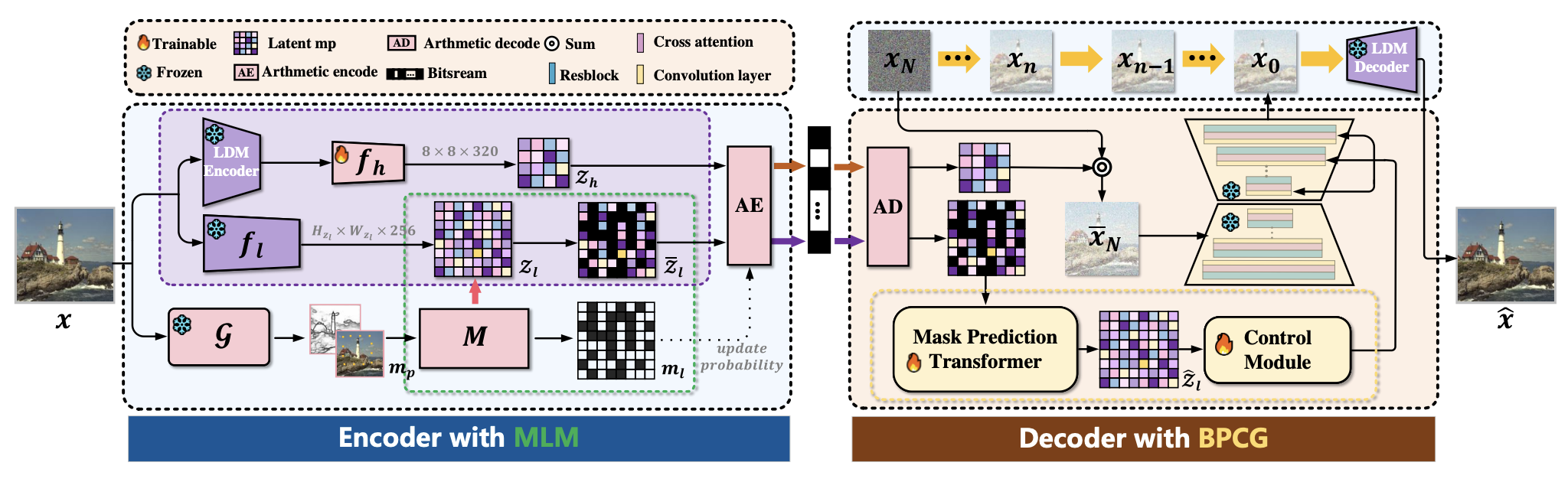

Generative image compression leverages the generative capabilities of diffusion models to achieve excellent perceptual fidelity at extreme-low bitrates. However, existing methods overlook the non-uniform complexity of images, making it difficult to balance global perceptual quality with local texture consistency and to achieve efficient allocation of coding resources. To address this issue, this paper proposes the Map-guided Masked Realistic Image Diffusion Codec (MRIDC), which aims to optimize the trade-off between local distortion and global perceptual quality in extreme-low bitrate compression. MRIDC integrates a vector-quantized image encoder with a diffusion-based decoder. At the encoding stage, the Map-guided Latent Masking (MLM) module enables adaptive resource allocation based on image complexity. At the decoding stage, the Bidirectional Prediction Controllable Generation (BPCG) module completes masked latent variables and reconstructs images. Experimental results demonstrate that MRIDC achieves state-of-the-art (SOTA) perceptual compression quality at extreme-low bitrates, effectively preserving feature consistency in key regions, advancing the perceptual rate-distortion performance curve, and establishing a new benchmark for balancing compression efficiency and visual fidelity.

Introduction / Background

In scenarios such as the Internet of Things (IoT), edge computing, and real-time visual transmission, extreme-low bitrate image compression has become a core requirement. It must not only achieve high compression ratios to save transmission and storage resources but also preserve the perceptual quality of images and feature consistency in key regions. Generative diffusion models have brought new breakthroughs to extreme-low bitrate image compression, significantly improving the perceptual fidelity of compressed images. However, existing diffusion-based compression methods share a core flaw: they treat an image as a uniform entity during encoding and reconstruction, ignoring differences in complexity between regions.

This flaw leads to unbalanced allocation of coding resources: simple regions consume more resources than they need, while complex key regions receive too few. The result is acceptable global perceptual quality but local texture distortion and loss of key features, which falls short of the detail that downstream visual perception tasks require. Meanwhile, the rate-distortion-perception trade-off of existing methods still leaves room for optimization, and a precise balance between compression efficiency and visual fidelity has not yet been achieved.

Aimed at researchers, engineers, and practitioners in image compression, computer vision, and multimedia processing, this paper proposes a region-adaptive diffusion-based image compression framework that addresses the uneven resource allocation and local distortion of existing methods, providing a new solution for extreme-low bitrate perceptual image compression. The work was published at CVPR 2025, a top-tier conference in computer vision.

Methodology / Main Content

1. Problem Definition

This research focuses on the perceptual image compression problem at extreme-low bitrates. Existing diffusion-based generative compression methods ignore the non-uniform complexity of images, which causes inefficient coding resource allocation, local texture distortion, and inconsistent key-region features. We aim to jointly optimize global perceptual quality, local texture consistency, and compression efficiency, thereby improving overall perceptual rate-distortion performance.

2. Solution Approach / Theoretical Derivation

We propose the Map-guided Masked Realistic Image Diffusion Codec (MRIDC). Its core idea is to decouple distortion from perceptual quality through region-adaptive coding resource allocation and constrained diffusion reconstruction, so that the two can be traded off precisely. The architecture pairs a vector-quantized encoder with a diffusion-based decoder, and its core modules are:

- Map-guided Latent Masking (MLM) Module (Encoding Stage): Based on prior information of image complexity, selectively masks the latent space to retain more latent variable information for complex/key regions and mask more redundant information for simple regions, realizing adaptive allocation of coding resources and improving resource utilization efficiency;

- Bidirectional Prediction Controllable Generation (BPCG) Module (Decoding Stage): Adds constraint guidance to the generation process of the diffusion model, bidirectionally predicts and completes masked latent variables based on unmasked latent variable information, achieves constrained image reconstruction, and ensures local texture consistency and fidelity of key features.
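The selective masking idea behind MLM can be sketched as follows. This is a minimal illustration, not the paper's implementation: the per-patch pixel variance used as the complexity map, the top-k selection rule, and the names `patch_complexity` and `map_guided_mask` are all assumptions made for the example. The diffusion-based completion performed by BPCG is not shown.

```python
def patch_complexity(image, patch):
    """Per-patch pixel variance, used here as a stand-in complexity map.

    `image` is a 2D list of grayscale values; `patch` is the patch side length.
    (Assumption: the paper's actual complexity prior may be defined differently.)
    """
    height, width = len(image), len(image[0])
    grid = []
    for top in range(0, height - patch + 1, patch):
        row = []
        for left in range(0, width - patch + 1, patch):
            pixels = [image[y][x]
                      for y in range(top, top + patch)
                      for x in range(left, left + patch)]
            mean = sum(pixels) / len(pixels)
            row.append(sum((p - mean) ** 2 for p in pixels) / len(pixels))
        grid.append(row)
    return grid


def map_guided_mask(complexity, keep_ratio):
    """Return a boolean grid: True = latent kept, False = latent masked.

    The most complex positions are kept; the rest are masked and would be
    left for the decoder to complete. (Hypothetical top-k rule.)
    """
    scored = [(score, r, c)
              for r, row in enumerate(complexity)
              for c, score in enumerate(row)]
    n_keep = max(1, round(keep_ratio * len(scored)))
    # Highest complexity first; ties fall back to raster order.
    ranked = sorted(scored, key=lambda t: (-t[0], t[1], t[2]))
    keep = [[False] * len(complexity[0]) for _ in complexity]
    for _, r, c in ranked[:n_keep]:
        keep[r][c] = True
    return keep


# Toy 4x4 image: flat left half, textured right half.
image = [
    [10, 10,   0, 255],
    [10, 10, 255,   0],
    [10, 10,   5,   5],
    [10, 10,   5, 200],
]
mask = map_guided_mask(patch_complexity(image, 2), keep_ratio=0.5)
print(mask)  # → [[False, True], [False, True]]
```

With a keep ratio of 0.5, the two flat patches on the left are masked and the two textured patches on the right are kept, which is the allocation behavior the MLM description calls for.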

3. Experimental Setup / Implementation Details

- Experimental Benchmarks: Experiments are conducted on mainstream public datasets for extreme-low bitrate image compression, comparing with current SOTA generative image compression methods and traditional compression methods;

- Evaluation Metrics: Comprehensive evaluation from three dimensions: perceptual quality (e.g., LPIPS, SSIM, subjective MOS scores), rate-distortion performance (RD curves), and key region feature consistency;

- Core Verification: Verify the region-adaptive resource allocation effect, local texture reconstruction capability, and generalization performance of MRIDC at different extreme-low bitrates.
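To make the bitrate side of these comparisons concrete, the following sketch estimates bits per pixel (bpp) for a masked vector-quantized latent grid. It rests on several assumptions that do not come from the paper: fixed-length index coding with no entropy coder, a binary mask sent at one bit per latent position, and a hypothetical helper named `bits_per_pixel`. The numbers in the usage lines are illustrative only.

```python
import math


def bits_per_pixel(height, width, downsample, codebook_size, keep_ratio,
                   send_mask=True):
    """Rough bpp for a masked VQ latent grid (hypothetical accounting).

    Each kept latent costs ceil(log2(codebook_size)) bits; the mask itself
    costs one bit per latent position when it must be transmitted.
    """
    tokens = (height // downsample) * (width // downsample)
    kept = round(keep_ratio * tokens)
    index_bits = kept * math.ceil(math.log2(codebook_size))
    mask_bits = tokens if send_mask else 0
    return (index_bits + mask_bits) / (height * width)


# Illustrative setting: 512x512 image, 16x downsampling, 1024-entry codebook.
print(bits_per_pixel(512, 512, 16, 1024, keep_ratio=1.0, send_mask=False))  # → 0.0390625
print(bits_per_pixel(512, 512, 16, 1024, keep_ratio=0.5))                   # → 0.0234375
```

In this toy setting, masking half of the latents lowers the rate even after paying for the mask; an entropy coder would change the exact figures.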

4. Results and Analysis

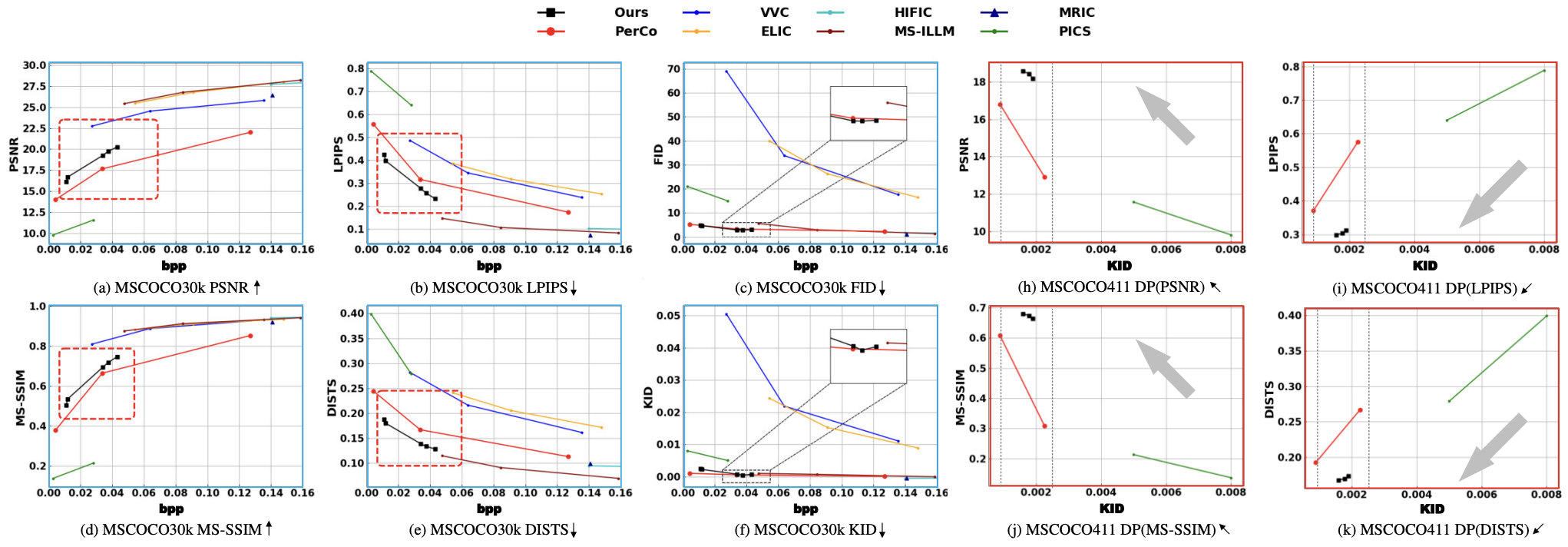

- SOTA Performance Verification: MRIDC achieves state-of-the-art perceptual compression quality at extreme-low bitrates, significantly outperforming comparison methods in objective metrics such as LPIPS, SSIM, and subjective MOS scores;

- Key Region Fidelity: Through region-adaptive resource allocation and constrained reconstruction, the model effectively preserves feature consistency in key image regions, solving the problems of local texture distortion and feature loss in existing methods;

- Rate-Distortion-Perception Optimization: The model significantly advances the perceptual rate-distortion performance curve, achieving higher perceptual quality at the same bitrate and a lower bitrate at the same perceptual quality, establishing a new benchmark for balancing compression efficiency and visual fidelity;

- Module Effectiveness: Ablation experiments verify the necessity of the core modules MLM and BPCG. Removing either module leads to decreased resource allocation efficiency, reduced perceptual quality, and lower local consistency.
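One simple way to examine key-region fidelity separately from global quality is to restrict a distortion measure to a region mask. The sketch below does this with mean squared error. It is an assumed, illustrative check rather than the metric used in the paper, and the name `region_mse` is hypothetical.

```python
def region_mse(reference, reconstruction, region):
    """Mean squared error over the pixels where `region` is True.

    All three arguments are 2D lists of the same shape; `region` holds booleans.
    """
    total, count = 0.0, 0
    for ref_row, rec_row, reg_row in zip(reference, reconstruction, region):
        for ref, rec, inside in zip(ref_row, rec_row, reg_row):
            if inside:
                total += (ref - rec) ** 2
                count += 1
    if count == 0:
        raise ValueError("region mask selects no pixels")
    return total / count


reference = [[0, 0], [10, 10]]
reconstruction = [[0, 4], [10, 12]]
key_region = [[False, True], [False, False]]
everywhere = [[True, True], [True, True]]

print(region_mse(reference, reconstruction, key_region))  # → 16.0
print(region_mse(reference, reconstruction, everywhere))  # → 5.0
```

The toy numbers show how a global average can hide a larger error concentrated in the region that matters, which is the failure mode the key-region analysis targets.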

Conclusion and Outlook

Key Contributions

- Proposed the Map-guided Masked Realistic Image Diffusion Codec (MRIDC), integrating region-adaptive resource allocation into diffusion-based generative image compression for the first time, decoupling distortion and perceptual quality in compression, and solving the core problem of uneven resource allocation in existing methods;

- Designed dedicated modules MLM (encoding stage) and BPCG (decoding stage), realizing end-to-end optimization from latent variable masking to constrained reconstruction, ensuring dual improvement of global perceptual quality and local texture consistency at extreme-low bitrates;

- The work was published at CVPR 2025; MRIDC achieves SOTA performance in extreme-low bitrate perceptual image compression, advancing the perceptual rate-distortion performance curve and establishing a new benchmark for balancing compression efficiency and visual fidelity.

Personal Notes

At the time, it went unnoticed that a series of later visual tokenizers would adopt similar dual-encoder structures. Unfortunately, this work explored only compression and reconstruction and did not investigate generation.